"If some one say: 'You divide ten into two parts: multiply the one by itself; it will be equal to the other taken eighty-one times.' Computation: You say, ten less thing, multiplied by itself, is a hundred plus a square less twenty things, and this is equal to eighty-one things. Separate the twenty things from a hundred and a square, and add them to eighty-one. It will then be a hundred plus a square, which is equal to a hundred and one roots. Halve the roots; the moiety is fifty and a half. Multiply this by itself, it is two thousand five hundred and fifty and a quarter. Subtract from this one hundred; the remainder is two thousand four hundred and fifty and a quarter. Extract the root from this; it is forty-nine and a half. Subtract this from the moiety of the roots, which is fifty and a half. There remains one, and this is one of the two parts."

~ Muḥammad ibn Mūsā al-Khwārizmī (Source: Wikipedia)
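The verbal recipe above is already an algorithm, so it translates almost line for line into code. Below is a minimal sketch in Python. The function name `split_ten` and its parameters are hypothetical, introduced only for illustration; the sketch assumes, as the quote does, that the problem has been reduced to the form "a square plus a number equals roots" (x² + c = b·x), and that the value under the square root is not negative.

```python
import math


def split_ten(total: float = 10.0, multiple: float = 81.0) -> tuple[float, float]:
    """Hypothetical helper that follows al-Khwarizmi's verbal steps.

    Call the part taken `multiple` times "thing" (x). Then
    (total - x)**2 == multiple * x, which expands to
    x**2 + total**2 == (multiple + 2 * total) * x,
    i.e. "a square plus a number equals roots".
    Assumes the value under the square root is not negative.
    """
    # Values in the comments below are for the default arguments.
    number = total * total          # "a hundred"
    roots = multiple + 2 * total    # "a hundred and one roots"
    moiety = roots / 2              # "Halve the roots": 50.5
    square = moiety * moiety        # "Multiply this by itself": 2550.25
    remainder = square - number     # "Subtract from this one hundred": 2450.25
    root = math.sqrt(remainder)     # "Extract the root from this": 49.5
    thing = moiety - root           # "Subtract this from the moiety": 1.0
    return thing, total - thing     # the two parts of ten


thing, other = split_ten()
print(thing, other)                 # 1.0 9.0
print(other * other == 81 * thing)  # True: 9 squared is 81 times 1
```

The recipe takes only the smaller root. The larger one, 50.5 + 49.5 = 100, also satisfies x² + 100 = 101·x, but it cannot be one of two parts of ten, so the verbal method never produces it.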

The tools for doing algebra have evolved over the centuries. When Muḥammad ibn Mūsā al-Khwārizmī was working on algebra, he did all of his work in words (see above). The symbols we have since invented are a different tool for solving the same problems. Because the same problem can be solved with either tool, the fundamental structure of algebra must be something distinct from both of them.
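For comparison, here is the same problem transcribed into modern symbolic notation, writing x for al-Khwārizmī's "thing" (the part taken eighty-one times). This is a present-day transcription, not his own presentation:

$$
\begin{aligned}
(10 - x)^2 &= 81x \\
100 + x^2 - 20x &= 81x \\
x^2 + 100 &= 101x \\
x &= \tfrac{101}{2} - \sqrt{\left(\tfrac{101}{2}\right)^2 - 100}
   = 50.5 - \sqrt{2450.25} = 50.5 - 49.5 = 1
\end{aligned}
$$

Each line corresponds to a sentence of the quote; only the notation has changed.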

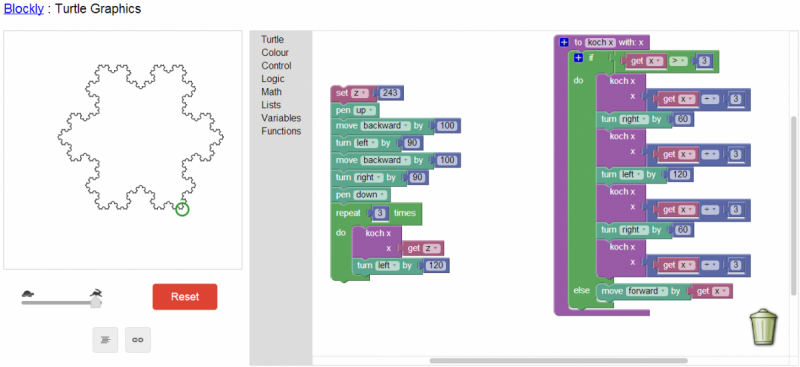

Can we do algebra with a computer, today's newest tool for the job, and still preserve the underlying qualities that make it algebra? How do access to a computer and knowledge of programming change what we can do with algebra?